The Shape of Data: Intrinsic Distance for Data Distributions

Anton Tsitsulin, Marina Munkhoeva, Davide Mottin, Panagiotis Karras, Alex Bronstein, Ivan Oseledets, Emmanuel Mueller

ICLR 2020

TL;DR

We propose a metric for comparing data distributions based on their geometry, without relying on any positional information.

In this paper:

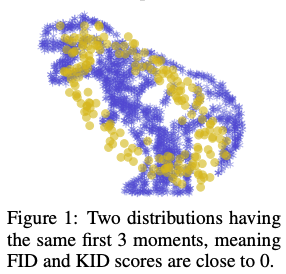

- We start from the observation that models capturing the multi-scale nature of the data manifold by matching higher-order distribution moments perform consistently better than their single-scale counterparts;

- We propose IMD, an Intrinsic Multi-scale Distance that is able to compare distributions using only intrinsic information about the data;

- We provide an efficient approximation thereof that renders computational complexity nearly linear;

- We empirically demonstrate that IMD effectively quantifies change in model representations;

- We use IMD to assess the sample quality of GANs and provide reliable insights into the layer-wise output dynamics of neural networks.
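To make the idea of an intrinsic, multi-scale comparison concrete, here is a minimal sketch, not the authors' implementation. It assumes that each sample is summarized by the heat-kernel trace of a k-nearest-neighbour graph Laplacian and that two samples are compared by a weighted maximum of the trace difference over scales `t`. The function names, the choice `k=5`, the grid of scales, the division by the sample size, and the use of an exact eigendecomposition (cubic cost, unlike the nearly linear approximation mentioned above) are all assumptions made for illustration.

```python
import numpy as np

def knn_adjacency(points, k=5):
    # Symmetric, unweighted k-nearest-neighbour graph (brute force, illustrative).
    sq = np.sum(points**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * points @ points.T
    np.fill_diagonal(d2, np.inf)  # exclude self-neighbours
    idx = np.argsort(d2, axis=1)[:, :k]
    n = len(points)
    adj = np.zeros((n, n))
    adj[np.repeat(np.arange(n), k), idx.ravel()] = 1.0
    return np.maximum(adj, adj.T)

def heat_trace(points, ts, k=5):
    # Heat-kernel trace sum_i exp(-t * lambda_i) of the normalized Laplacian,
    # divided by n (an assumption, so samples of different size are comparable).
    adj = knn_adjacency(points, k)
    d_inv_sqrt = 1.0 / np.sqrt(adj.sum(axis=1))
    lap = np.eye(len(points)) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]
    eig = np.linalg.eigvalsh(lap)
    return np.array([np.exp(-t * eig).sum() for t in ts]) / len(points)

def multiscale_distance(x, y, ts=np.logspace(-1, 1, 50), k=5):
    # Weighted maximum over scales of the absolute heat-trace difference.
    diff = np.abs(heat_trace(x, ts, k) - heat_trace(y, ts, k))
    return float(np.max(np.exp(-2.0 * (ts + 1.0 / ts)) * diff))

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    blob = rng.normal(size=(200, 3))
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))  # random orthogonal matrix
    moved = blob @ q + 3.0                        # same shape, new position
    angles = rng.uniform(0, 2 * np.pi, size=200)
    circle = np.c_[np.cos(angles), np.sin(angles), np.zeros(200)]
    print("blob vs moved blob:", multiscale_distance(blob, moved))
    print("blob vs circle:    ", multiscale_distance(blob, circle))
```

Because the sketch only looks at the neighbourhood graph, rotating and translating a sample should leave the distance at essentially zero, while a sample with a different shape should give a larger value. This is the sense in which the comparison uses no positional information.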
